{

"cells": [

{

"cell_type": "markdown",

"metadata": {},

"source": [

"- In [part1](https://hrngok.github.io/posts/bikeshare/) and [part2](https://hrngok.github.io/posts/bikeshare%20part2/) of our analyse on `bikeshare` dataset, we did explanatory analysis (EDA) and used Linear Regression for our prediction and Kaggle submission\n",

"- We will try to improve our prediction score on the same dataset by more complex tree-based models\n",

"- Before diving directly into the project maybe it would be better to remind the tree based models\n",

"- So in this post we will review the trees and we will apply these models to our dataset in the next post\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Trees vs Linear Models\n",

"\n",

"- With Linear Regression we need to create new features to represent the **features interactions**\n",

"\n",

"- Feature interactions occurs when the effects of features are combined\n",

"\n",

"- For example, the **multiplication of two features** can have more predictive power on the target than the individual features\n",

"\n",

"- With linear models, features must be **linearly correlated** (having non-zero correlation) to the target.\n",

"- Trees can learn the **non-linear interactions** between the features and the target\n",

"\n",

">For example, what if the $target$ goes up when \n",

">- the $feature1$ is going up, and \n",

"- the $feature2$ is going down?\n",

"

\n",

"

\n",

">- One way to capture those interactions is either to **multiply** the features, or to use algorithms (like trees) that can handle non-linearity.\n",

"\n",

"- Linear regression is parametric i.e we don't need to keep the training data after we get the model parameters (**w** (weights), **b** (intercepts))\n",

"\n",

"- Parametric and nonparametric models differ in the way parameters of the model are fixed or data needed each time to determine the parameters\n",

"\n",

"- Trees are considered as non-parametric models.\n",

"\n",

"- Trees partitions the features space horizontally or vertically thus they create axis-parallel hyper rectangles whereas linear models can divide the space in any direction or orientation.\n",

"\n",

"- This is a direct consequence of the fact that trees select a single feature at a time whereas linear models use a weighted combination of all features.\n",

"\n",

"- In principle, a tree can cut up the instance space arbitrarily finely into very small regions.\n",

"- A linear model places only a single decision surface through the entire space. It has freedom in the orientation of the surface, but it is limited to a single division into two segments.\n",

"\n",

"- This is a direct consequence of there being a single (linear) equation that uses all of the variables, and must fit the entire data space"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"#### An hyperplane by a linear model\n",

"\n",

"\n",

"An hyperplane created by a linear model divides the feature space into two\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"#### Trees cut the space horizontally and vertically"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

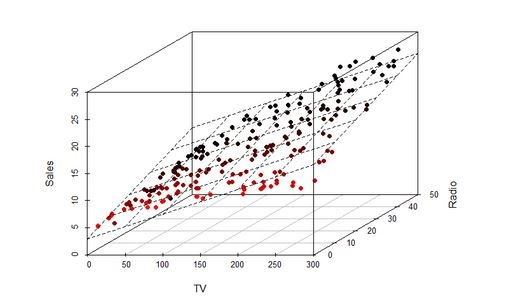

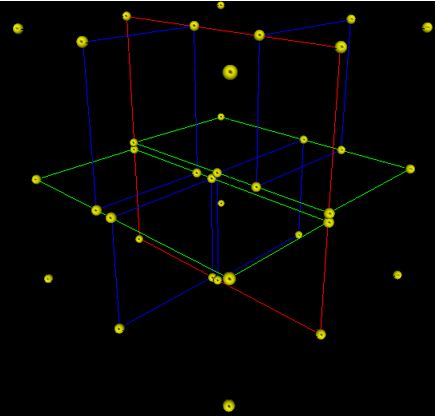

"\n",

"\n",

"> - A 3-dimensional (with 3 features) tree. \n",

">- The first split (by the red vertical plane) cuts the space into 2 regions then, \n",

">- each of the two regions are split again (by the green horizontal planes) into 2 regions. \n",

">- Finally, those 4 regions are split (by the 4 blue vertical planes) into 2 regions. \n",

">- Since there is no more splitting, finally the whole space is split into 8 regions"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Advantages of Decision Trees:\n",

"\n",

"- Simple to interpret.\n",

"- Trees can be visualised.\n",

"- Requires little data preparation. \n",

"- Other techniques often require \n",

" - data normalization, \n",

" - dummy variables need to be created \n",

" \n",

" \n",

"- The cost of using the tree (i.e., predicting data) is **logarithmic** in the number of data points used to train the tree.\n",

" \n",

"- Able to handle both numerical and categorical data (Sklearn only support numerical data at the moment)\n",

"- They can find non-linear relationships between features and target variables, as well as interactions between features. \n",

"- **Quadratic, exponential, cyclical**, and other relationships can all be revealed, as long as we have enough data to support all of the necessary cuts. \n",

"\n",

"- Decision trees can also find **non-smooth behaviors, sudden jumps**, and **peaks**, that other models like linear regression or artificial neural networks can hide. \n",

"\n",

"- Decision tree can easily identify the most important(predictive) feautes and relation between two or more features. \n",

"- With the help of decision trees, we can create new features that has better power to predict target variable. \n",

"\n",

"\n",

"## Disadvantages of Decision Trees :\n",

"\n",

"- Decision-tree learners can create **over-complex trees** that do not generalise the data well. This is called overfitting. \n",

"- Setting constraints such as\n",

" - the minimum number of samples required at a leaf node or\n",

" - the maximum depth of the tree and\n",

" - pruning (not currently supported by Sklearn) are necessary to avoid this problem\n",

" \n",

"\n",

"- Decision trees can be unstable because small variations in the data might result in a completely different tree being generated. This problem is mitigated by using decision trees within an **ensemble**. \n",

"

\n",

"

\n",

"\n",

"- Decision tree learners create biased trees if some classes dominate. It is therefore recommended to **balance the dataset** prior to fitting with the decision tree.\n",

"

\n",

"

\n",

"\n",

"- Practical decision-tree learning algorithms are based on heuristic algorithms such as the **greedy algorithm** where **locally optimal decisions** are made at each node. Such algorithms cannot guarantee to return the globally optimal decision tree. \n",

"- This can be mitigated by training multiple trees in an ensemble learner, where the **features and samples are randomly sampled with replacement**.\n",

"

\n",

"

\n",

"\n",

"- Tree models can only interpolate but not extrapolate (the same is true for random forests and tree boosting). That is, a decision tree makes **constant prediction** for the objects that **lie beyond the bounding box** set by the training set in the feature space. \n",

"\n",

"\n",

"\n",

"- In principle, you can approximate anything with decision trees but in practice you can't approximate very well. Still decision trees are very efficient because in practice the feature spaces are high dimensional that is data is very sparse"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

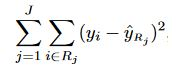

"## How a Regression Tree Splits the Feature Space?\n",

"\n",

"- Trees start testing features from the ones that potentially most quickly lead to a conclusion i.e most predictive or important\n",

"\n",

"- In each node trees test a feature\n",

"- Order of features matters\n",

"- In a step, for each feature the loss function (accuracy in case of classification) on the target variables calculated and the feature who provides the least loss is selected as the split feature\n",

"\n",

"- If the features are continuous, like temperature in our example, internal nodes may test the value against a **treshold**\n",

"- Everytime tree ask `Feature1>=something` or `Feature2>=something` results in two splits of the feature space horizontally or vertically\n",

"\n",

"- Decision trees are nested **if-then** staments\n",

"\n",

"- They predict the value of a target variable by learning simple decision rules inferred from the features.\n",

"\n",

"- For instance, in our dataset, decision trees learn from data with a set of **if-then-else** decision rules like if the `\"hour\"` is later than `5h` and earlier than `6h` else if `temperature` is higher than `15C` then..\n",

"\n",

"- The deeper the tree, the more complex the decision rules and the fitter the model.\n",

"\n",

"## Steps of Splitting Process\n",

"\n",

"Lets denote our features (like `temperature, humidity` etc) as $X_1, X_2,...,X_p$\n",

"\n",

"For the split process there are 2 steps:\n",

"\n",

"1. We divide the feature space (the set of possible values for features) into **J** distinct and non-overlapping regions: $R_1, R_2,...,R_J$\n",

"

\n",

"\n",

"2. When we make any prediction for a sample, we check in which region(subset) our sample falls. \n",

" - Say it is the region $R_j$. \n",

" - Then the **mean** of the target values of the training samples in $R_j$ will be the predicted value of the given sample.\n",

"\n",

"For instance, suppose that \n",

"- in Step 1 we obtain 2 regions, $R_1$ and $R_2$, and \n",

"- target mean of the training samples in the first region is $10$, while \n",

"- target mean of the training samples in the second region is $20$. \n",

"- Then, if a new sample falls in $R_1$, we will predict a value of $10$ for the target value of that sample, and if it falls in $R_2$ we will predict a value of $20$.\n",

"\n",

"- For regression trees the future importance is measured by how much each feature reduce the variance when they split the data\n",

"\n",

"### How do we construct the regions $R1,...,RJ $ ?\n",

"\n",

"We divide the space into high-dimensional rectangles, or boxes. The goal is to find boxes $R_1,...,R_J$ that minimize the\n",

"- **Mean Squared Error**, which minimizes the **L2 error** using **mean** values of the regions, or \n",

"- **Mean Absolute Error**, which minimizes the **L1 error** using **median** values of the regions\n",

"\n",

"`Mean Squared Error:`\n",

"\n",

"\n",

"\n",

"where $ŶR_j$ is the target mean for the training observations within the $j th$ box and the **$Y_i$** is the actual target value of the test observation\n",

"\n",

"**Note:** \n",

"- As seen above, regression tree split depends on the mean of the targets, since the mean is severly affected by outliers, the regression tree will suffer from outliers.

\n",

"\n",

"\n",

"- There are two main approaches to solve this problem: \n",

" - either removing the outliers or \n",

" - building the decision tree algorithm that makes splits based on the median instead of the mean, as the median is not affected by outliers. "

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Recursive Binary Splitting\n",

"For computational feasiblity, we take a top-down, greedy approach that is known as **recursive binary splitting**. \n",

"\n",

"The recursive binary splitting approach is top-down because\n",

"- it begins at the top of the tree (at which **point all observations belong to a single region**) and then \n",

"- successively splits the features space; each split is indicated via 2 new branches further down on the tree. \n",

"\n",

"It is greedy because \n",

"- at each step of the tree-building process, the best split is made at that particular step, rather than \n",

"- looking ahead and picking a split that will lead to a better tree in some future step"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"In order to perform recursive binary splitting, \n",

"- we first select the feature $X_j$ (jth feature) and the cutpoint $s$ such that splitting the feature space into two regions where $X_j< s$ and $X_j≥s$ leads to the greatest possible reduction in Mean Squared Error. \n",

"\n",

"\n",

"That is, \n",

"- we consider **all features** $X_1,...,X_p$, and **all possible values of the cutpoint** $s$ for each of the features, and then \n",

"- choose the feature and cutpoint such that the resulting tree has the lowest mean squared error. \n",

"\n",

"In greater detail, \n",

"- **for any** $j$ (indice of the features) and $s$ (cutting point), we define \n",

"- a half-plane $R_1(j, s)$ where $X_j\n",

"\n",

"\n",

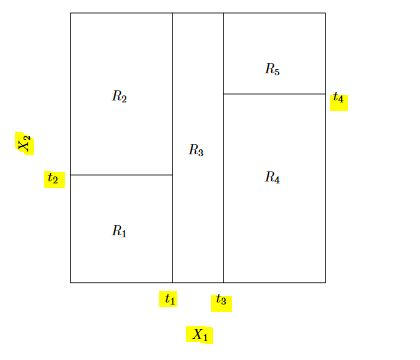

"A 5-region example of this approach is shown in below. The output of recursive binary splitting on a 2-features ($X_1, X_2$) example.\n",

"\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

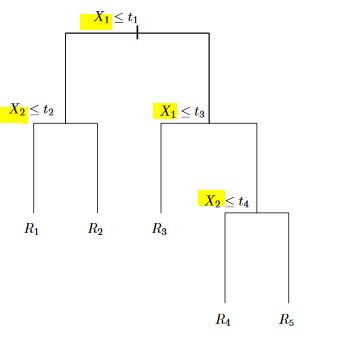

"A tree corresponding to the partition in the above figure.\n",

"- First we split the feature space into 2 regions by $X_1>t_1$ and $X_1<=t_1$ and continue to partitioning the new regions\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

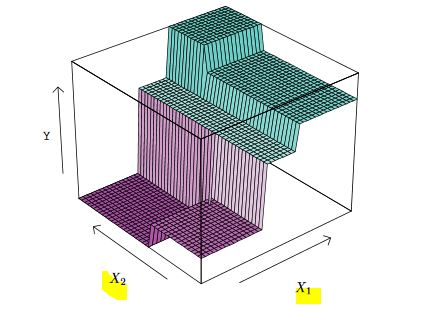

"A perspective plot of the prediction surface corresponding to the tree above.\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

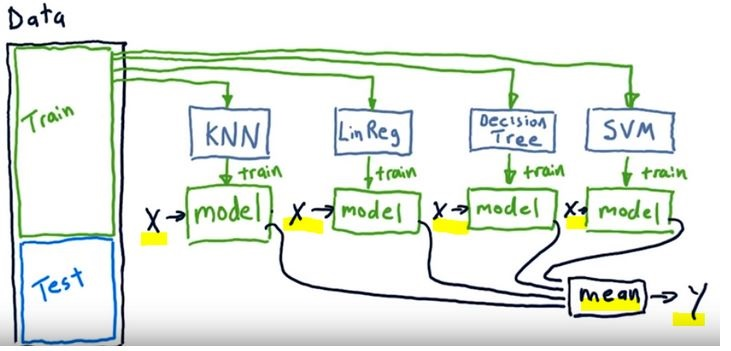

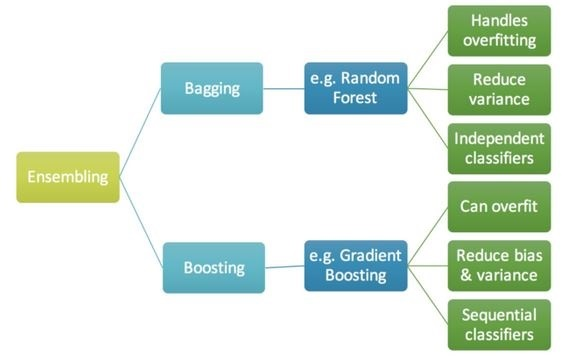

"# Ensemble Methods\n",

"\n",

"- Can single learners be combined in order to make a stronger learner?\n",

"- The idea behind the ensemble learners is to use the wisdom of crowd by assembling together several algorithms or models in order to improve generalizability / robustness over a single estimator\n",

"- Ensemble models are meta-models that aggregates the predictions of individual models based on specific formulas.\n",

"\n",

"\n",

"\n",

"- In the above image we see a tamplate of an ensemble model\n",

"- We train single learners like KNN, Decision Tree, SVM etc with the same data (**X**)\n",

"- Then we combine all the outcomes (**y**) of the single learners. In case of regression we take the mean of the all the predictions\n",

"- For evaluation we can use the mean of the predictions for testing with the our test data\n",

"\n",

"### Why ensembles might be better?\n",

"- Ensemble methods does not overfit (less variance) as any of single learners\n",

"- Ensemle learners have less bias than each of the single learners\n",

"- If we combine several models of different types, we can avoid being biased by one approach.\n",

"- This typically results in less overfitting, and thus better predictions in the long run, especially on unseen data.\n",

"\n",

"We can classify ensembe methods as **averaging** and **boosting** methods\n",

"- In averaging methods, we build several estimators independently and then to average their predictions. \n",

"- On average, the combined estimator is usually better than any of the single base estimator because its variance is reduced.\n",

"- Examples: Bagging methods, Forests of randomized trees etc\n",

"\n",

"- In boosting methods, we build base estimators sequentially and one tries to reduce the bias of the preceding estimator (boosting the preceding) \n",

"- Examples: AdaBoost, Gradient Tree Boosting etc"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

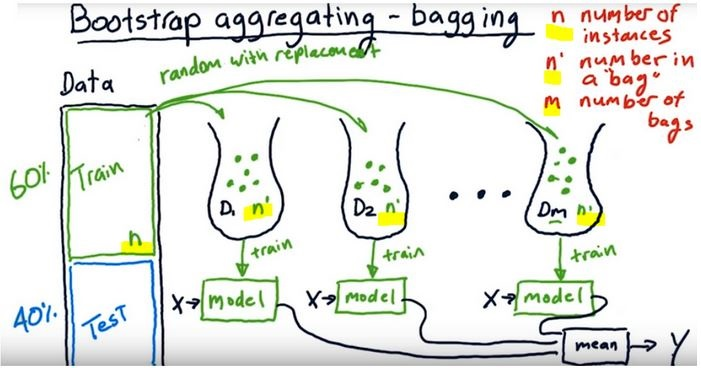

"## Bootstrap Aggregating- Bagging\n",

"\n",

"A way of building ensemble learners is \n",

"- using the same algorithm (one kind) but \n",

"- training each learner on a different set of data\n",

"- Like in the picture below we create bags of data by randomly subsetting with replacement (from the original traning dataset, bootstrap method)\n",

"- Number of instances in the original training dataset should be equal to the number of instances in the random data bags (n’ = n). \n",

"- Because the training data is sampled with replacement, about 60% of the instances in each bag are unique.\n",

" \n",

"For example a Random Forest algorithm can train 100 Decision Trees on different random subsets of the original training data.\n",

"\n",

"\n",

"\n",

"\n",

"- As depicted here the single models work independently i.e models run in parallel\n",

"- As understood from the name we bootstrap the data and aggregate all the predictions of single learners\n",

"\n",

"- As they provide a way to reduce overfitting, bagging methods work best with strong and complex models (`e.g., fully developed decision trees`)"

]

},

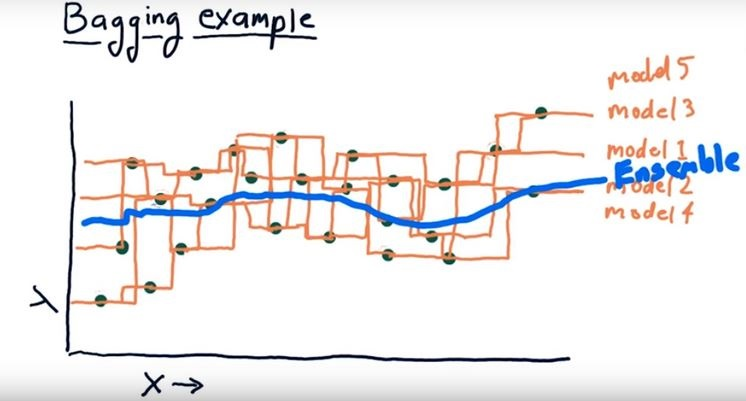

{

"cell_type": "markdown",

"metadata": {},

"source": [

"\n",

"\n",

"- When a new instance is fed to the ensemble, each single model makes it's prediction and the meta model collects the predictions and make the final decision either by taking the mean (regression) or voting (classification)\n",

"\n",

"\n",

"- In the image even though single models provide different outcomes taking the average of all the single models by an ensemble model smoothes the outcome"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

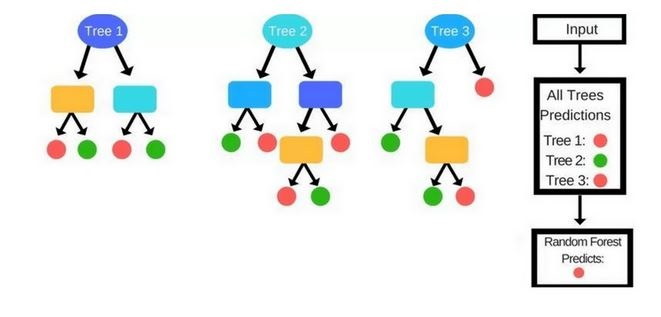

"## Forest of Randomized Trees (Random Forest)\n",

"\n",

"- It is a special case of ensemble learning\n",

"- The base learners are Decision Trees\n",

"- Training and prediction tasks are parallelized, because the individual trees are entirely independent of each other.\n",

"- Each estimator is trained on a different bootstrap sample having the same size as the training set\n",

"- Random Forest introduces further randomization in the training of individual trees at the feature selection level\n",

"\n",

"### Randomization in Random Forests\n",

"- At the data selection level: The single estimators (trees) select the data randomly by bootstrap aggregation\n",

"- At the feature selection level: Each tree, instead of searching greedily for the best feature to create branches, it randomly samples the features without replacement\n",

"\n",

"### Training Process of Random Forests\n",

"1. Randomly select “d” features from total “m” features where d << m\n",

"2. Among the “d” features, choose the best split point like in the decision trees (by information gain)\n",

"3. Split the node into child nodes using the best split\n",

"4. Repeat 1 to 3 steps without using the features used before\n",

"5. Build forest by repeating the steps from the beginning \n",

"\n",

"\n",

"\n",

"\n",

"### Prediction Process\n",

"- In a Decision Tree a new instance (test sample) goes from the root node to the bottom until it falls in a leaf node. \n",

"- In the Random Forests algorithm, each new data point goes through the same process, but now it visits all the different trees in the ensemble, which were grown using random samples of both training data and features. \n",

"\n",

"- For Classification problems, it uses the mode or most frequent class predicted by the individual trees (also known as a majority vote), whereas for Regression tasks, it uses the average prediction of each tree."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Boosting"

]

},

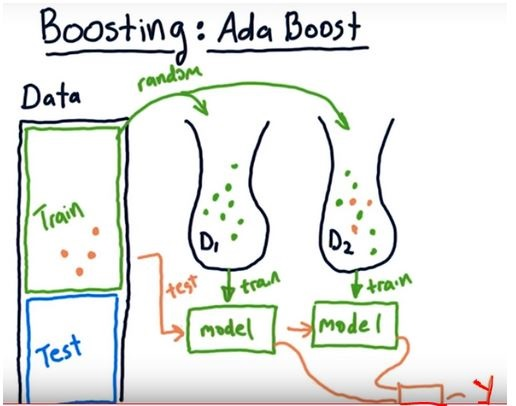

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Boosting \n",

"- is slightly different than bagging\n",

"- works squentially i.e each single learner in the ensemble model is dependent \n",

"- can track the model who failed the accurate prediction and focus on the areas that system does not perform well\n",

"- works iteratively (we massage the data and construct better and better model)\n",

"\n",

"In boosting each predictor tries to correct its predecessor.\n",

"\n",

"### Training Process of Boosting\n",

"- Initially all the data points were weighted equally ie they have equal chances to be selected as as input data\n",

"- In each boosting round\n",

" - we find the worst performing single learner with the weighted training errors\n",

" - we then raise the weigths of the training data points which are mispredicted by the current learner.\n",

" - each predictor is assigned a coefficient α that weighs its contribution to the final prediction of the ensemble\n",

" - α depends on the predictor's training error\n",

" \n",

"- We compute the final prediction as a linear combination of all the learners with the weights of the learners(α) which depends on the prediction performance of them\n",

"\n",

"- The way of re-weighting and combining the learners depends on the boosting type like Adaboost, Xgboost etc\n",

"- Boosting methods usually work best with weak models (e.g., `shallow decision trees`).\n",

"\n",

"\n",

"\n",

"In the image we see Adaboost (stands for Adaptive Boosting) template. \n",

"- The orange data points are the ones that could not well predicted by the first learner \n",

"- When the we choose data for the next learner (next bag of data), we select randomly but different from the bagging method all the data points will be weighted according to the wrong predicted points. Thus, the wrong predicted samples will have more chance to be selected as an input for the next predictor\n",

"- After choosing the second dataset(input for the second predictor) we test the overall performance of the bags and continue to choose data bag for the third learner regarding the poor predicted points"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

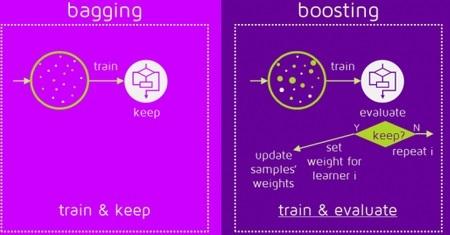

"Here is an image summarizing the way sampling for single learner, bagging and boosting\n",

"\n",

"- In single learner the model use the training set directly \n",

"- In bagging, each learner randomly chooses samples by replacement\n",

"- In boosting, each learner chooses samples from a weighted samples set\n",

"\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"- Boosting and bagging are not new algorithms\n",

"- They are meta algorithms that wrap the single learners\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"This image displays the way of training data in bagging and boosting\n",

"\n",

"\n",

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Sources:

\n",

"https://www-bcf.usc.edu/~gareth/ISL/ISLR%20Seventh%20Printing.pdf#page=31

\n",

"https://scikit-learn.org/stable/modules/tree.html#classification

\n",

"https://classroom.udacity.com/courses/ud501/lessons/4802710867/concepts/47973133930923

\n",

"https://quantdare.com/what-is-the-difference-between-bagging-and-boosting/"

]

}

],

"metadata": {

"kernelspec": {

"display_name": "Python 3",

"language": "python",

"name": "python3"

},

"language_info": {

"codemirror_mode": {

"name": "ipython",

"version": 3

},

"file_extension": ".py",

"mimetype": "text/x-python",

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

"version": "3.6.6"

},

"nikola": {

"category": "",

"date": "2018-11-28 22:08:04 UTC+02:00",

"description": "",

"link": "",

"slug": "tree-based models",

"tags": "tree based models, decision trees, random forest, bagging, boosting",

"title": "Tree-based Models",

"type": "text"

}

},

"nbformat": 4,

"nbformat_minor": 2

}